Runge-Kutta 4(5)

Runge-Kutta is a numerical solver providing an efficient explicit method to solve Initial Value Problems for Ordinary Differential Equations (ODEs).

Description

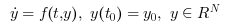

Called by xcos, Runge-Kutta is a numerical solver providing an efficient fixed-size step method to solve Initial Value Problems of the form:

$$\dot{y} = f(t,y), \quad y(t_0) = y_0$$

CVode and IDA use variable-size steps for the integration.

A drawback of variable-size steps is an unpredictable computation time. Runge-Kutta does not adapt to the complexity of the problem, but it guarantees a stable computation time.

As of now, this method is explicit, so it involves no Newton or Functional iterations, and it is not advised for stiff problems.

It is an enhancement of the Euler method, which approximates $y_{n+1}$ by truncating the Taylor expansion after the first-order term: $y_{n+1} = y_n + h\,f(t_n, y_n)$.

By convention, to use fixed-size steps, the program first computes a fitting h that approaches the simulation parameter max step size.
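One plausible way to compute such a fitting h is sketched below. This is an assumption for illustration, not the code xcos actually runs: the helper name `fit_step` and its arguments are hypothetical. It picks the smallest whole number of steps for which h does not exceed the max step size, then divides the interval evenly.

```scilab
// Hypothetical helper (not part of xcos): fit a fixed step h to [t0, tf]
// so that h <= hmax and the interval is split into a whole number of steps.
function [h, nsteps] = fit_step(t0, tf, hmax)
    nsteps = ceil((tf - t0) / hmax);   // smallest step count giving h <= hmax
    h = (tf - t0) / nsteps;            // equal steps that cover the interval exactly
endfunction

// Example: an interval of length 1 with a max step size of 0.3
[h, nsteps] = fit_step(0, 1, 0.3);
mprintf("nsteps = %d, h = %f\n", nsteps, h);   // nsteps = 4, h = 0.250000
```

With this choice, h stays below the requested maximum while the last step lands exactly on the end of the interval.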

An important difference between Runge-Kutta and the previous methods is that it takes into account terms up to the fourth derivative of y, while the others only use linear combinations of y and y'.

Here, the next value $y_{n+1}$ is determined by the present value $y_n$ plus the size of the interval, $h$, times the weighted average of four estimated slopes, each specified by the function $f(t,y)$:

$$y_{n+1} = y_n + \frac{h}{6}\,(k_1 + 2k_2 + 2k_3 + k_4)$$

- $k_1 = f(t_n,\ y_n)$ is the slope at the beginning of the interval, using $y_n$ (Euler's method),

- $k_2 = f(t_n + h/2,\ y_n + h k_1/2)$ is the slope at the midpoint of the interval, using $y_n + h k_1/2$,

- $k_3 = f(t_n + h/2,\ y_n + h k_2/2)$ is again the slope at the midpoint, but now using $y_n + h k_2/2$,

- $k_4 = f(t_n + h,\ y_n + h k_3)$ is the slope at the end of the interval, using $y_n + h k_3$.
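The update described above can be sketched in a few lines of Scilab. This is a minimal illustration of the classical fourth-order scheme, not the solver code used by xcos. Each k is treated as a slope estimate, so h multiplies it explicitly. The names `rk4_step` and `decay`, and the test problem y' = -y, are assumptions chosen for the example.

```scilab
// One classical RK4 step for y' = f(t, y); k1..k4 are slope estimates.
function ynext = rk4_step(f, t, y, h)
    k1 = f(t, y);                      // slope at the beginning of the interval
    k2 = f(t + h/2, y + h*k1/2);       // slope at the midpoint, using k1
    k3 = f(t + h/2, y + h*k2/2);       // slope at the midpoint, using k2
    k4 = f(t + h, y + h*k3);           // slope at the end of the interval
    ynext = y + (h/6) * (k1 + 2*k2 + 2*k3 + k4);
endfunction

// Illustrative test problem (an assumption): y' = -y, y(0) = 1
function ydot = decay(t, y)
    ydot = -y;
endfunction

// Integrate from t = 0 to t = 1 with ten fixed steps of size 0.1
h = 0.1;
y = 1;
for n = 0:9
    y = rk4_step(decay, n*h, y, h);
end
mprintf("y(1) = %.8f, exact = %.8f\n", y, exp(-1));
```

The number of evaluations of f is the same on every step (four), which is what makes the cost per step predictable.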

We can see that with the $k_i$, we progress in the derivatives of $y_n$. So in $k_4$, we are approximating $y^{(4)}_n$, thus making an error in $O(h^5)$ on each step.

So the total error is (number of steps) × $O(h^5)$. And since the number of steps is the interval size divided by $h$ by definition, the total error is in $O(h^4)$.

This error analysis gave the method its name, Runge-Kutta 4(5): $O(h^5)$ per step, $O(h^4)$ in total.
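The $O(h^4)$ total error can be checked numerically: if h is halved, the error at the end of the interval should shrink by a factor close to $2^4 = 16$. The sketch below assumes the test problem y' = -y, y(0) = 1 on [0, 1], whose exact solution is exp(-t). The function name `rk4_error` is hypothetical.

```scilab
// Integrate y' = -y, y(0) = 1 on [0, 1] with n fixed RK4 steps
// and return the absolute error at t = 1.
function err = rk4_error(n)
    h = 1 / n;
    y = 1;
    for i = 1:n
        k1 = -y;                       // f(t, y) = -y does not depend on t
        k2 = -(y + h*k1/2);
        k3 = -(y + h*k2/2);
        k4 = -(y + h*k3);
        y = y + (h/6) * (k1 + 2*k2 + 2*k3 + k4);
    end
    err = abs(y - exp(-1));
endfunction

e1 = rk4_error(10);    // h = 0.1
e2 = rk4_error(20);    // h = 0.05
ratio = e1 / e2;       // expected to be roughly 16 for a fourth-order method
mprintf("error ratio when h is halved: %.2f\n", ratio);
```

A ratio near 16 is consistent with a global error in $O(h^4)$. A first-order method such as Euler would give a ratio near 2 instead.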

Although the solver works fine for max step size values down to $10^{-3}$, rounding errors sometimes come into play as we approach $4 \times 10^{-4}$. The interval then cannot be split properly and the results become erratic.

Examples

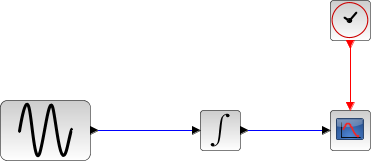

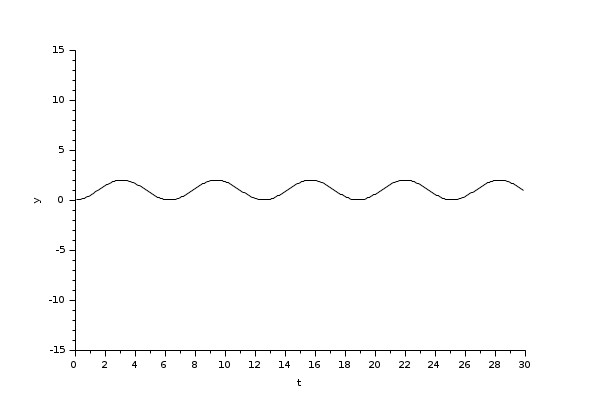

The integral block returns its continuous state. We can evaluate it with Runge-Kutta by running the example:

    // Import the diagram and set the ending time
    loadScicos();
    loadXcosLibs();
    importXcosDiagram("SCI/modules/xcos/examples/solvers/ODE_Example.zcos");
    scs_m.props.tf = 5000;

    // Select the solver Runge-Kutta and set the precision
    scs_m.props.tol(6) = 6;
    scs_m.props.tol(7) = 10^-2;

    // Start the timer, launch the simulation and display time
    tic();
    try xcos_simulate(scs_m, 4); catch disp(lasterror()); end
    t = toc();
    disp(t, "Time for Runge-Kutta:");

The Scilab console displays:

Time for Runge-Kutta:

8.906

Next, we compare the computation times of Runge-Kutta and CVode by running the example with the five solvers in turn. The Scilab console displays:

Time for BDF / Newton:

18.894

Time for BDF / Functional:

18.382

Time for Adams / Newton:

10.368

Time for Adams / Functional:

9.815

Time for Runge-Kutta:

4.743

These results show that on a nonstiff problem, for roughly the same required precision and with the same step size forced on every solver, Runge-Kutta is faster.

Variable-size step ODE solvers are not appropriate for deterministic real-time applications because the computational overhead of taking a time step varies over the course of an application.

History

| Version | Description |
| --- | --- |
| 5.4.1 | Runge-Kutta 4(5) solver added |
